From Data to Diagnosis: Building Clinically Reliable AI Systems in Healthcare

Artificial Intelligence is reshaping healthcare — from medical imaging and diagnostics to predictive risk modeling and personalized treatment planning.

But healthcare AI is not just another machine learning deployment.

It operates in environments where every prediction can influence a clinical decision.

At JTheta, our work in AI in Healthcare and End-to-End AI Workflow Development is built around one principle:

AI in medicine must be clinically reliable — not just statistically impressive.

In healthcare, accuracy is not a benchmark.

It is a responsibility.

The difference between a 94% and a 98% accurate model is not necessarily a marginal improvement.

It may represent delayed diagnoses, misclassified conditions, or unequal patient outcomes.
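To put numbers on that gap, the arithmetic below assumes a hypothetical cohort of 10,000 screened patients and treats overall accuracy as the only figure. A real evaluation would break errors down into false negatives and false positives, which carry very different clinical costs.

```python
def expected_errors(accuracy: float, cases: int) -> int:
    """Number of misclassified cases implied by an overall accuracy figure."""
    return round((1.0 - accuracy) * cases)


cohort = 10_000  # hypothetical number of screened patients
for acc in (0.94, 0.98):
    print(f"{acc:.0%} accurate -> ~{expected_errors(acc, cohort)} misclassified patients")
```

At that scale the 94% model misclassifies roughly 600 patients against roughly 200 for the 98% model: three times as many errors.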

So the real question is not:

“How do we build better models?”

It is:

“How do we build clinically dependable AI systems?”

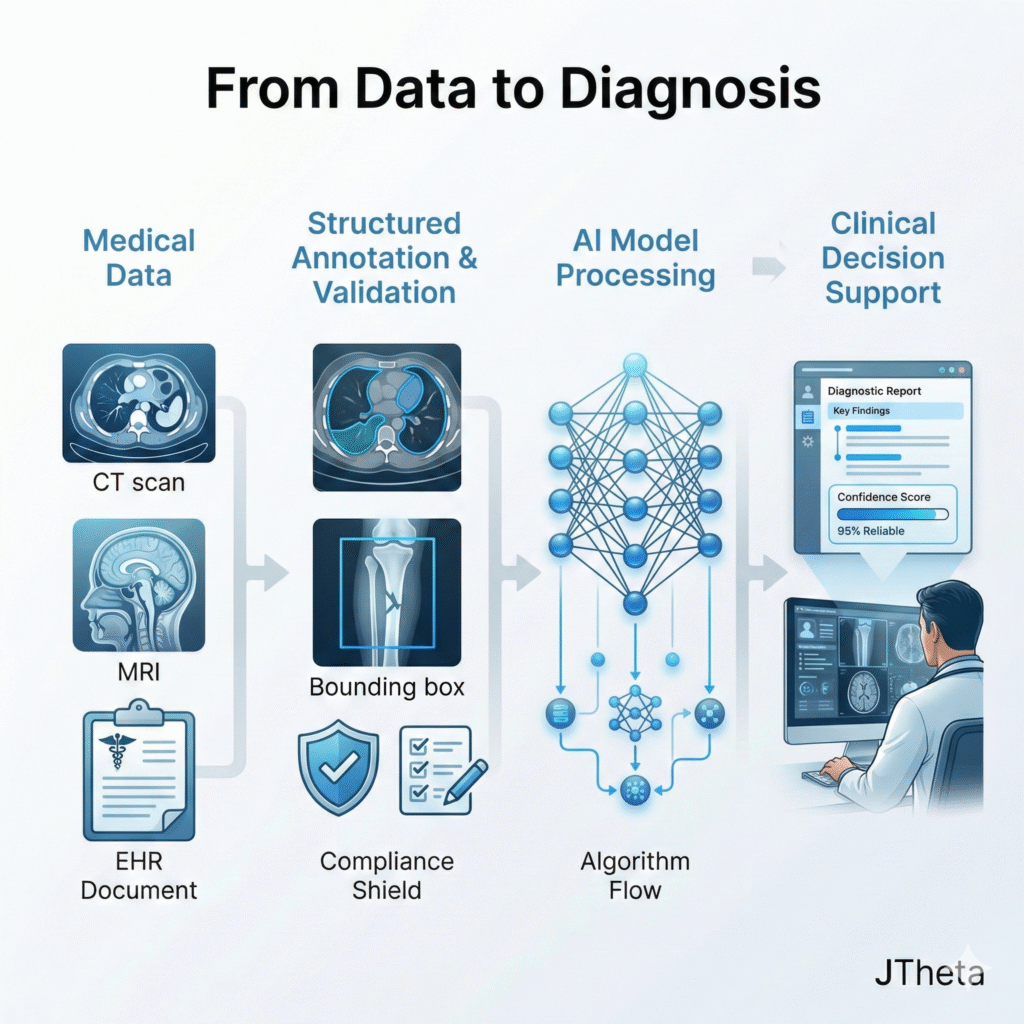

(I) Healthcare AI Is a Data Engineering Challenge First

Most healthcare AI initiatives emphasize:

- Model architecture

- Training pipelines

- Validation metrics

- Deployment frameworks

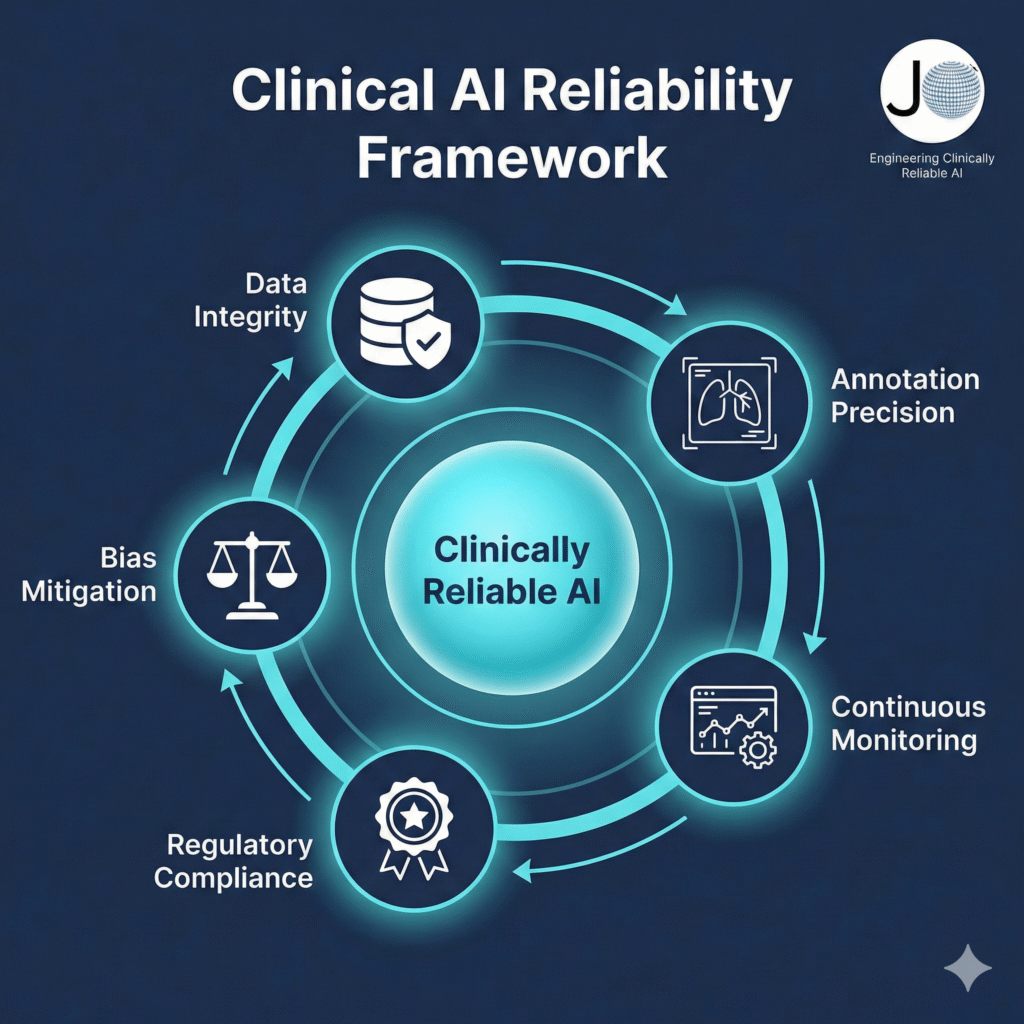

But clinical reliability depends on something deeper:

- Data integrity

- Annotation precision

- Bias mitigation

- Regulatory compliance

- Workflow alignment

A structured end-to-end workflow — from data acquisition and preprocessing to annotation governance and post-deployment monitoring — determines whether an AI system performs safely in real-world clinical environments.

If the training data contains inconsistencies, ambiguous labeling, or demographic imbalance, no architecture can compensate for those weaknesses.

In healthcare, noisy ground truth directly translates into diagnostic risk.
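A minimal pre-training audit can surface these weaknesses before they ever reach a model. The sketch below assumes a hypothetical list of records with `image_id`, `label`, and `age_group` fields; it flags records with missing labels, images that received conflicting labels, and each age group's share of the records.

```python
from collections import Counter, defaultdict


def audit_records(records):
    """Flag missing labels, conflicting labels, and demographic imbalance.

    Each record is a dict with hypothetical keys: image_id, label, age_group.
    """
    missing = [r["image_id"] for r in records if not r.get("label")]

    # Collect every distinct label assigned to each image.
    labels_per_image = defaultdict(set)
    for r in records:
        if r.get("label"):
            labels_per_image[r["image_id"]].add(r["label"])
    conflicting = sorted(i for i, labels in labels_per_image.items() if len(labels) > 1)

    # Share of records per demographic group.
    group_counts = Counter(r["age_group"] for r in records)
    total = sum(group_counts.values())
    group_share = {g: n / total for g, n in group_counts.items()}

    return {"missing": missing, "conflicting": conflicting, "group_share": group_share}


records = [
    {"image_id": "img-001", "label": "malignant", "age_group": "40-64"},
    {"image_id": "img-001", "label": "benign", "age_group": "40-64"},
    {"image_id": "img-002", "label": "", "age_group": "65+"},
    {"image_id": "img-003", "label": "benign", "age_group": "40-64"},
]
print(audit_records(records))
```

Here `img-001` is flagged as conflicting, `img-002` as unlabeled, and the 65+ group makes up only a quarter of the records.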

(II) The Core Challenges of AI in Healthcare

1️⃣ Heterogeneous, High-Stakes Medical Data

Healthcare datasets are inherently complex and multi-modal:

- Radiology images (CT, MRI, X-ray)

- Histopathology slides

- Electronic Health Records (EHR)

- Laboratory reports

- Wearable device streams

Each modality requires domain-specific preprocessing, annotation standards, and validation protocols.

For example, medical imaging initiatives often rely on structured General Image Annotation pipelines adapted specifically for anatomical precision and clinical context.

Generic data pipelines rarely meet medical-grade requirements.
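As one concrete example of modality-specific preprocessing, CT intensities are expressed in Hounsfield units (HU) and are commonly "windowed" to the tissue range of interest before training. The sketch below uses plain Python lists and a commonly cited soft-tissue window; the window values are illustrative, not a prescription for any particular protocol.

```python
def apply_ct_window(hu_values, center, width):
    """Clip HU values to [center - width/2, center + width/2] and rescale to [0, 1]."""
    low = center - width / 2
    high = center + width / 2
    return [(min(max(v, low), high) - low) / (high - low) for v in hu_values]


# Soft-tissue window: center 40 HU, width 400 HU, i.e. the range [-160, 240] HU.
pixels = [-1000, -160, 40, 240, 1000]  # air, window floor, mid-window, window ceiling, dense bone
print(apply_ct_window(pixels, center=40, width=400))
```

Everything below the window (such as air) maps to 0.0 and everything above it (such as dense bone) maps to 1.0, so the model's input range is spent on the tissues that matter for the task. A lung or bone study would use a different window.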

2️⃣ Annotation Requires Clinical Governance

Healthcare annotation is not a generic labeling exercise.

It requires:

- Clinically trained professionals

- Structured diagnostic criteria

- Consensus-based labeling frameworks

- Measured inter-observer agreement

Consider tumor segmentation in radiology. Without strict boundary definitions and annotation guidelines, variability increases model uncertainty — and uncertainty increases clinical risk.
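One common way to quantify that boundary variability is the Dice similarity coefficient between two annotators' masks, 2|A ∩ B| / (|A| + |B|), where 1.0 means identical masks. The sketch below represents each mask as a set of hypothetical (row, col) pixel coordinates.

```python
def dice(mask_a, mask_b):
    """Dice similarity between two segmentation masks given as sets of pixel coordinates."""
    if not mask_a and not mask_b:
        return 1.0  # both annotators marked nothing: treat as perfect agreement
    overlap = len(mask_a & mask_b)
    return 2 * overlap / (len(mask_a) + len(mask_b))


# Two annotators outline the same lesion; the second boundary is shifted by 2 rows.
annotator_1 = {(r, c) for r in range(10, 20) for c in range(10, 20)}  # 100 pixels
annotator_2 = {(r, c) for r in range(12, 22) for c in range(10, 20)}  # 100 pixels
print(f"Dice = {dice(annotator_1, annotator_2):.2f}")
```

A 2-pixel disagreement on a 10-pixel-tall lesion already drops Dice to 0.80, which is why explicit boundary definitions in the annotation guidelines matter.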

At JTheta, annotation is engineered as a governed data function — with ontology design, audit trails, and validation loops built into the process.
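The mechanics of an audit trail vary by platform. As an illustrative sketch only, not a description of JTheta's implementation, an append-only log in which each entry stores the hash of the previous one makes silent edits to annotation history detectable. The entry schema here is hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body):
    """Deterministic SHA-256 of an entry body (keys sorted for stable JSON)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AnnotationAuditLog:
    """Append-only, hash-chained log of annotation events (hypothetical schema)."""

    def __init__(self):
        self.entries = []

    def record(self, image_id, annotator, label):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"image_id": image_id, "annotator": annotator,
                "label": label, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": entry_hash(body)})

    def verify(self):
        """True only if every entry's hash and its link to the previous entry are intact."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry_hash(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AnnotationAuditLog()
log.record("img-001", "radiologist_a", "malignant")
log.record("img-001", "radiologist_b", "malignant")
print(log.verify())                 # True
log.entries[0]["label"] = "benign"  # silently rewrite history
print(log.verify())                 # False
```

A production trail would also record timestamps and the reason for each change. Hash chaining alone does not detect entries removed from the end of the log, so the latest hash would need to be stored somewhere independent.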

3️⃣ Regulatory & Compliance Imperatives

Healthcare AI must operate within strict regulatory ecosystems:

- HIPAA and patient privacy frameworks

- FDA or equivalent regulatory pathways

- Clinical validation and audit requirements

This demands:

- Full data lineage tracking

- Version-controlled datasets

- Transparent model explainability

- Traceable annotation workflows

Compliance is not an afterthought — it must be architected into the AI lifecycle from day one through a structured End-to-End AI Governance Approach.
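Version-controlled datasets and lineage tracking both rest on being able to state exactly which data a model was trained on. A minimal sketch, assuming each file is hashed individually and the resulting manifest is hashed again to produce a hypothetical dataset version ID; file contents are passed in as bytes here to keep the example self-contained, whereas a real pipeline would read them from storage.

```python
import hashlib
import json


def dataset_version(files):
    """Return (version_id, manifest) for a dataset.

    `files` maps relative file paths to raw bytes. Changing any file's content,
    renaming a file, or adding/removing a file yields a different version ID.
    """
    manifest = {path: hashlib.sha256(data).hexdigest() for path, data in files.items()}
    manifest_bytes = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return hashlib.sha256(manifest_bytes).hexdigest()[:16], manifest


v1, _ = dataset_version({"labels.csv": b"img-001,benign\n", "img-001.dcm": b"\x00\x01"})
v2, _ = dataset_version({"labels.csv": b"img-001,malignant\n", "img-001.dcm": b"\x00\x01"})
print(v1 != v2)  # True: relabeling a single image produces a new dataset version
```

Storing the version ID alongside each trained model gives a direct link from any prediction back to the exact labels behind it.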

(III) What Clinically Reliable AI Actually Requires

Transitioning from research prototypes to production-grade clinical systems requires structural discipline.

✔ Structured Ontology Design

Clear definitions of disease classes, severity scales, and diagnostic thresholds.

Ambiguous labels create ambiguous predictions.
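In code, an ontology can start as a closed set of classes with an explicit severity scale, plus a validator that rejects any label outside it. The class names and severity grades below are hypothetical placeholders, not a clinical standard.

```python
from dataclasses import dataclass
from enum import Enum


class Finding(Enum):
    NORMAL = "normal"
    BENIGN = "benign"
    MALIGNANT = "malignant"


# Hypothetical severity scale: the grades permitted for each finding.
ALLOWED_SEVERITY = {
    Finding.NORMAL: {0},
    Finding.BENIGN: {1, 2},
    Finding.MALIGNANT: {3, 4, 5},
}


@dataclass(frozen=True)
class Annotation:
    image_id: str
    finding: Finding
    severity: int


def parse_annotation(image_id, finding, severity):
    """Validate raw annotator input against the ontology; raise on anything ambiguous."""
    try:
        finding_enum = Finding(finding.strip().lower())
    except ValueError:
        raise ValueError(f"{image_id}: unknown finding {finding!r}") from None
    if severity not in ALLOWED_SEVERITY[finding_enum]:
        raise ValueError(f"{image_id}: severity {severity} not allowed for {finding_enum.value}")
    return Annotation(image_id, finding_enum, severity)


print(parse_annotation("img-001", "Malignant", 4))
try:
    parse_annotation("img-002", "suspicious?", 2)
except ValueError as err:
    print(err)  # img-002: unknown finding 'suspicious?'
```

Free-text labels such as "suspicious?" are rejected at entry time instead of leaking into the training set as an undefined class.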

✔ Measured Inter-Annotator Agreement

Metrics such as:

- Cohen’s Kappa

- Fleiss’ Kappa

- Consensus-based validation

High disagreement between annotators signals weak ground truth, not model weakness.
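Both kappa statistics compare observed agreement p_o with the agreement expected by chance p_e: κ = (p_o − p_e) / (1 − p_e). Cohen's kappa covers two raters; Fleiss' kappa generalizes the idea to a fixed number of raters per item. The from-scratch sketch below is for illustration; in practice scikit-learn's `cohen_kappa_score` and statsmodels' `fleiss_kappa` implement these. It does not handle the degenerate case where chance agreement equals 1 (every rating falls in one category).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labeling the same items."""
    n = len(rater_a)
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    return (p_observed - p_expected) / (1 - p_expected)


def fleiss_kappa(ratings):
    """Fleiss' kappa; ratings[i][j] = number of raters who put item i in category j.

    Assumes every item was rated by the same number of raters.
    """
    n_items = len(ratings)
    n_raters = sum(ratings[0])
    n_categories = len(ratings[0])
    # Agreement among raters on each individual item.
    p_items = [(sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
               for row in ratings]
    p_bar = sum(p_items) / n_items
    # Overall proportion of all assignments that went to each category.
    p_cat = [sum(row[j] for row in ratings) / (n_items * n_raters) for j in range(n_categories)]
    p_e = sum(p * p for p in p_cat)
    return (p_bar - p_e) / (1 - p_e)


rater_a = ["tumor", "tumor", "normal", "normal", "tumor",
           "normal", "tumor", "normal", "normal", "normal"]
rater_b = ["tumor", "normal", "normal", "normal", "tumor",
           "normal", "tumor", "tumor", "normal", "normal"]
print(f"Cohen's kappa: {cohens_kappa(rater_a, rater_b):.2f}")  # 0.58

# 4 cases, 3 readers each; columns are [tumor, normal].
ratings = [[3, 0], [0, 3], [2, 1], [1, 2]]
print(f"Fleiss' kappa: {fleiss_kappa(ratings):.2f}")  # 0.33
```

The two raters agree on 80% of cases, yet kappa is only about 0.58 once chance agreement is removed, which is why raw percent agreement can overstate ground-truth quality.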

✔ Bias Detection & Dataset Balancing

Healthcare datasets frequently underrepresent:

- Certain age demographics

- Ethnic populations

- Rare conditions

Unchecked bias leads to uneven model performance across patient groups.

In medicine, equity is mandatory — not optional.
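Uneven performance only becomes visible when metrics are broken out by group. A minimal sketch that computes sensitivity (the share of true positive cases the model catches) per subgroup and flags groups trailing the best one; the group labels, toy predictions, and the 0.05 tolerance are hypothetical.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """Sensitivity (recall on positive cases), computed separately for each subgroup."""
    positives = defaultdict(int)
    detected = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            detected[group] += int(pred == 1)
    return {g: detected[g] / positives[g] for g in positives}


def flag_gaps(per_group, max_gap=0.05):
    """Groups whose sensitivity trails the best-performing group by more than max_gap."""
    best = max(per_group.values())
    return sorted(g for g, s in per_group.items() if best - s > max_gap)


y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 1, 0, 1, 0, 0, 1, 0]
groups = ["18-39", "18-39", "40-64", "40-64", "40-64", "65+", "65+", "65+", "65+", "65+"]

per_group = sensitivity_by_group(y_true, y_pred, groups)
print(per_group)             # 18-39 and 40-64 at 1.0, 65+ at 0.5
print(flag_gaps(per_group))  # ['65+']
```

In this toy data the overall sensitivity is 6 of 8 positives (75%), a figure that hides the fact that half of the positive cases in the 65+ group are missed.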

✔ Continuous Monitoring & Lifecycle Governance

Healthcare environments evolve:

- Updated diagnostic criteria

- New disease variants

- Changes in imaging equipment

Static models degrade over time.

Continuous evaluation and lifecycle management prevent silent performance drift in live clinical environments.
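Because ground-truth labels often arrive weeks after a prediction, one complementary signal is a shift in the input data itself. The sketch below computes the Population Stability Index, PSI = Σ (actual − expected) · ln(actual / expected), over hypothetical shares of studies per scanner model. The 0.2 alert threshold is a widely used rule of thumb, not a clinical standard.

```python
import math


def population_stability_index(baseline, current, eps=1e-6):
    """PSI between two binned distributions, each given as proportions summing to 1."""
    psi = 0.0
    for expected, actual in zip(baseline, current):
        expected = max(expected, eps)  # guard against log(0) for empty bins
        actual = max(actual, eps)
        psi += (actual - expected) * math.log(actual / expected)
    return psi


# Hypothetical share of studies per scanner model: at validation time vs. last month.
baseline = [0.25, 0.50, 0.25]
current = [0.10, 0.45, 0.45]

psi = population_stability_index(baseline, current)
print(f"PSI = {psi:.3f}")
if psi > 0.2:  # rule-of-thumb threshold for a significant shift
    print("Significant input shift: trigger re-validation")
```

Here a new scanner taking a larger share of studies pushes PSI to about 0.26, which would prompt re-validation before any drop in accuracy shows up in delayed outcome data.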

(IV) From Accuracy to Clinical Trust

Healthcare professionals do not adopt AI because it performs well on benchmark datasets.

They adopt AI when it demonstrates:

- Consistency across patient populations

- Transparent decision logic

- Seamless workflow integration

- Clinical validation evidence

- Regulatory readiness

Trust — not novelty — drives adoption in healthcare systems.

(V) The Strategic Advantage in Healthcare AI

As foundation models become more accessible, differentiation will not come from parameter count.

It will come from:

- High-integrity medical datasets

- Governed annotation workflows

- Compliance-ready data infrastructure

- Human-in-the-loop validation frameworks

- End-to-end AI lifecycle management

Organizations that invest in structured data engineering and clinical collaboration build defensible, scalable healthcare AI systems.

(VI) Final Perspective

In healthcare, AI is not just software.

It is decision support in high-stakes clinical environments.

The path from data to diagnosis is not powered by model innovation alone — it is powered by disciplined data governance, medical collaboration, and uncompromising quality control.

That is what transforms AI from experimental technology into a trusted clinical asset.

(VII) Build Clinically Reliable AI with JTheta

If you’re developing AI systems for:

- Medical imaging

- Diagnostic support tools

- Predictive patient analytics

- Remote monitoring systems

- Healthcare data automation

JTheta can help you architect, annotate, validate, and deploy healthcare AI solutions built for clinical reliability — not just model performance.

🔎 What We Offer:

- Medical-grade data annotation workflows

- End-to-end AI lifecycle management

- Ontology engineering & governance

- Regulatory-aligned data infrastructure

- Continuous monitoring frameworks

(VIII) Book a Demo

Explore how structured annotation and end-to-end AI workflow engineering can elevate your healthcare AI systems.

👉 Book a Demo with Our Experts

Let’s move from experimental AI to clinically dependable systems.