Not Just Boxes: The Hidden Intelligence Behind 3D Bounding Boxes in LiDAR

When people look at LiDAR annotations,

they often see simple boxes floating in space.

But here’s the reality:

A 3D bounding box is not just a box.

It’s a compressed representation of reality.

Every dimension, every angle, every coordinate—

encodes how a machine understands the physical world.

And if you get it wrong, your entire perception system feels it:

Misjudged distances

Incorrect motion prediction

Unstable tracking

It Starts with Dimensions — But It’s Not Just Size

At the core of every cuboid are three numbers:

Length (L) → front to back

Width (W) → side to side

Height (H) → bottom to top

On paper, this looks trivial.

In reality, this is where data quality begins—or breaks.

What actually happens in the pipeline:

Slight overestimation → objects overlap → IoU drops

Underestimation → missing points → feature loss

Inconsistent sizing across frames → temporal instability
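To make the first point concrete, here is a minimal sketch of how oversizing alone lowers IoU. It assumes two axis-aligned boxes that share the same center, which is a simplification; real evaluation code also handles offsets and rotation. The function name `aabb_iou_3d` and the sedan dimensions are illustrative, not taken from any particular toolkit.

```python
def aabb_iou_3d(dims_a, dims_b):
    """IoU of two axis-aligned boxes that share the same center.

    dims_* are (length, width, height) in metres. Because the centers
    coincide, the overlap along each axis is simply the smaller extent.
    """
    inter = 1.0
    for a, b in zip(dims_a, dims_b):
        inter *= min(a, b)
    vol_a = dims_a[0] * dims_a[1] * dims_a[2]
    vol_b = dims_b[0] * dims_b[1] * dims_b[2]
    return inter / (vol_a + vol_b - inter)


true_dims = (4.5, 1.8, 1.5)                     # hypothetical sedan
inflated = tuple(d * 1.10 for d in true_dims)   # every side 10% too large
iou_inflated = aabb_iou_3d(true_dims, inflated)
```

With every side 10% too large, the IoU against the true extent falls to about 0.75, even though the box is perfectly centered.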

Models implicitly learn:

$P(l, w, h \mid \text{class})$

So if your annotations are inconsistent,

your model learns a distorted version of reality.

Good annotation isn’t about fitting a box.

It’s about preserving real-world scale with statistical consistency.
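One way to check statistical consistency is to compare each box against the typical size for its class. The sketch below is an assumption about how such a check could look, not a description of any specific tool: the `cls` and `dims` keys, the function name, and the 25% tolerance are all hypothetical.

```python
from collections import defaultdict
from statistics import median


def flag_size_outliers(boxes, tolerance=0.25):
    """Return indices of boxes whose l, w, or h deviates from the
    per-class median by more than `tolerance` (as a fraction)."""
    by_class = defaultdict(list)
    for box in boxes:
        by_class[box["cls"]].append(box["dims"])

    # Per-class median of each dimension: a crude stand-in for P(l, w, h | class).
    medians = {
        cls: tuple(median(d[i] for d in dims) for i in range(3))
        for cls, dims in by_class.items()
    }

    flagged = []
    for idx, box in enumerate(boxes):
        ref = medians[box["cls"]]
        if any(abs(v - m) / m > tolerance for v, m in zip(box["dims"], ref)):
            flagged.append(idx)
    return flagged


boxes = [
    {"cls": "car", "dims": (4.5, 1.8, 1.5)},
    {"cls": "car", "dims": (4.6, 1.9, 1.5)},
    {"cls": "car", "dims": (4.4, 1.8, 1.6)},
    {"cls": "car", "dims": (6.5, 1.8, 1.5)},  # suspiciously long for a car
    {"cls": "pedestrian", "dims": (0.6, 0.6, 1.7)},
]
outliers = flag_size_outliers(boxes)
```

A flagged box is not necessarily wrong (it may be a limousine), but it is a good candidate for human review.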

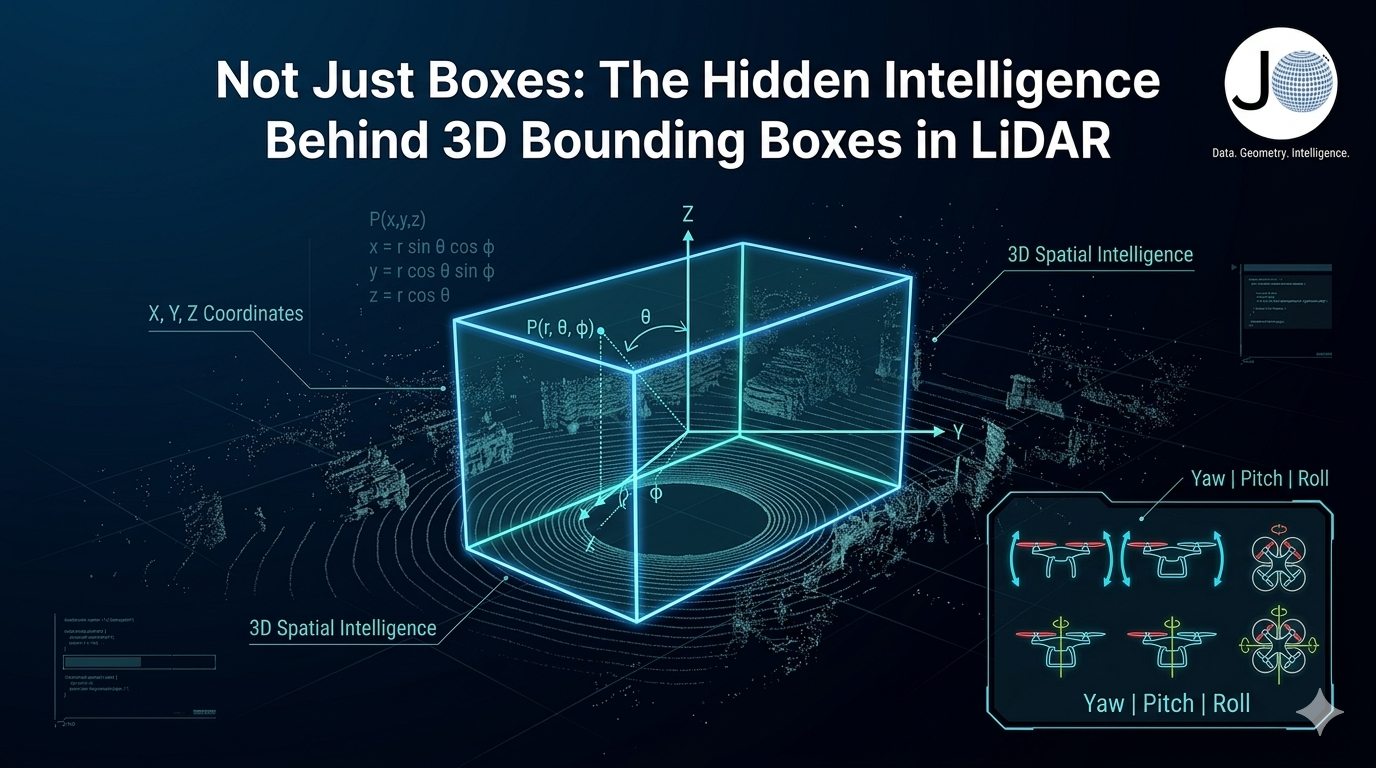

Orientation: Where Most Subtle Errors Hide

Rotation is where annotation stops being mechanical and starts requiring judgment.

Yaw (Z-axis rotation)

Defines heading direction

Critical for trajectory prediction

Even a small yaw error:

Breaks velocity estimation

Causes incorrect path forecasting

For a moving object, the annotated yaw should agree with the direction of its velocity:

$\theta = \operatorname{arctan2}(v_y, v_x)$
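As a rough illustration of why small yaw errors matter, the sketch below derives a heading from velocity, compares it with an annotated yaw, and estimates how far a straight-line forecast drifts sideways. The function names and the sample numbers are assumptions made for this example.

```python
import math


def heading_from_velocity(vx, vy):
    """Heading angle in radians implied by a planar velocity vector."""
    return math.atan2(vy, vx)


def angle_diff(a, b):
    """Signed difference a - b, wrapped to the interval [-pi, pi)."""
    return (a - b + math.pi) % (2.0 * math.pi) - math.pi


def lateral_drift(distance, yaw_error):
    """Sideways offset after travelling `distance` metres along a
    heading that is off by `yaw_error` radians."""
    return distance * math.sin(yaw_error)


annotated_yaw = math.radians(50.0)
velocity_yaw = heading_from_velocity(1.0, 1.0)    # 45 degrees
error = angle_diff(annotated_yaw, velocity_yaw)   # about 5 degrees
drift = lateral_drift(20.0, error)                # metres off course after 20 m
```

In this example, a 5 degree yaw error puts a 20 m straight-line forecast roughly 1.7 m off to the side, which is about half a typical lane width.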

Pitch & Roll (Y and X axes)

Often ignored—but dangerous to overlook.

Pitch → slopes, ramps, elevation

Roll → tilted vehicles, fallen objects

Edge Cases:

Vehicles on inclined roads

Objects partially mounted on curbs

Off-road or construction environments

Ignoring rotation edge cases = training your model for a “perfect world” that doesn’t exist.
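For readers who want to see how the three angles combine, here is a minimal sketch of a full rotation built from yaw, pitch, and roll. It assumes a right-handed frame with Z up and the common Z-Y-X composition order; other datasets use different conventions, so treat the sign choices as an assumption rather than a standard.

```python
import math


def rotation_matrix(yaw, pitch=0.0, roll=0.0):
    """3x3 rotation R = Rz(yaw) * Ry(pitch) * Rx(roll), angles in radians."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def rotate(matrix, vec):
    """Apply a 3x3 matrix (list of rows) to a 3-vector."""
    return tuple(sum(m * v for m, v in zip(row, vec)) for row in matrix)


forward = (1.0, 0.0, 0.0)

# Yaw only: a 90 degree turn points the box's forward axis along +Y.
flat_heading = rotate(rotation_matrix(math.radians(90.0)), forward)

# Under this convention a negative pitch tilts the nose upward,
# as for a vehicle climbing a ramp.
on_ramp = rotate(rotation_matrix(0.0, pitch=math.radians(-10.0)), forward)
```

A yaw-only cuboid would force the ramp case back to a flat heading, which is exactly the kind of edge case the list above describes.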

Position: The Anchor of Reality

Every cuboid is positioned by the coordinates of its center:

X → lateral displacement

Y → longitudinal distance

Z → vertical height

This is not just geometry—this is how the system perceives space.

Why precision matters:

A small shift in position leads to:

Incorrect depth estimation

Poor object association in tracking

Sensor fusion mismatches

Ground alignment constraint (taking z as the box center and h as the box height):

$z \approx z_{\text{ground}} + h/2$

Violation leads to:

Floating objects

Sinking boxes

Broken scene understanding

Position defines spatial truth. Everything else depends on it.
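The ground alignment constraint is easy to turn into an automated check. The sketch below assumes z is the box center, that a ground height estimate is available for each object, and that a 10 cm tolerance is acceptable; the function names and the tolerance are hypothetical choices for illustration.

```python
def ground_residual(z_center, height, z_ground):
    """Gap in metres between the bottom face of the box and the ground.

    Zero means the box rests exactly on the ground, i.e.
    z_center == z_ground + height / 2.
    """
    return (z_center - height / 2.0) - z_ground


def ground_status(z_center, height, z_ground, tol=0.10):
    """Classify a box as 'ok', 'floating', or 'sinking'."""
    residual = ground_residual(z_center, height, z_ground)
    if residual > tol:
        return "floating"
    if residual < -tol:
        return "sinking"
    return "ok"


resting = ground_status(z_center=0.75, height=1.5, z_ground=0.0)
floating = ground_status(z_center=1.25, height=1.5, z_ground=0.0)
sinking = ground_status(z_center=0.45, height=1.5, z_ground=0.0)
```

Objects that legitimately leave the ground (a raised barrier arm, a load on a crane) would need to be exempted from a check like this.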

Axis Alignment: The Silent QA Signal

Most annotators overlook this—but it’s critical for data validation pipelines.

is_axis_aligned = true → no rotation

is_axis_aligned = false → rotated object

Why it matters:

This simple flag enables:

Automated QA checks

Rotation anomaly detection

Dataset consistency analysis

At scale, this becomes a powerful signal for identifying systematic errors.
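A simple automated check is to recompute the flag from the stored angles and report disagreements. This is a sketch under assumptions: only `is_axis_aligned` comes from the text above, while the `id`, `yaw`, `pitch`, and `roll` keys and the 1 degree tolerance are hypothetical.

```python
import math


def expected_axis_aligned(yaw, pitch=0.0, roll=0.0, tol=math.radians(1.0)):
    """True when every rotation angle is within `tol` of zero."""
    return all(abs(angle) <= tol for angle in (yaw, pitch, roll))


def find_flag_mismatches(annotations, tol=math.radians(1.0)):
    """Return ids of annotations whose stored flag disagrees with
    the flag implied by their rotation angles."""
    mismatched = []
    for ann in annotations:
        implied = expected_axis_aligned(
            ann["yaw"], ann.get("pitch", 0.0), ann.get("roll", 0.0), tol
        )
        if ann["is_axis_aligned"] != implied:
            mismatched.append(ann["id"])
    return mismatched


annotations = [
    {"id": "a", "yaw": 0.0, "is_axis_aligned": True},
    {"id": "b", "yaw": 0.5, "is_axis_aligned": False},
    {"id": "c", "yaw": 0.5, "is_axis_aligned": True},   # flag says no rotation
    {"id": "d", "yaw": 0.0, "is_axis_aligned": False},  # flag says rotated
]
mismatches = find_flag_mismatches(annotations)
```

A cluster of mismatches from one annotator or one scene is often a hint of a systematic problem, such as a tool default that was never changed.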

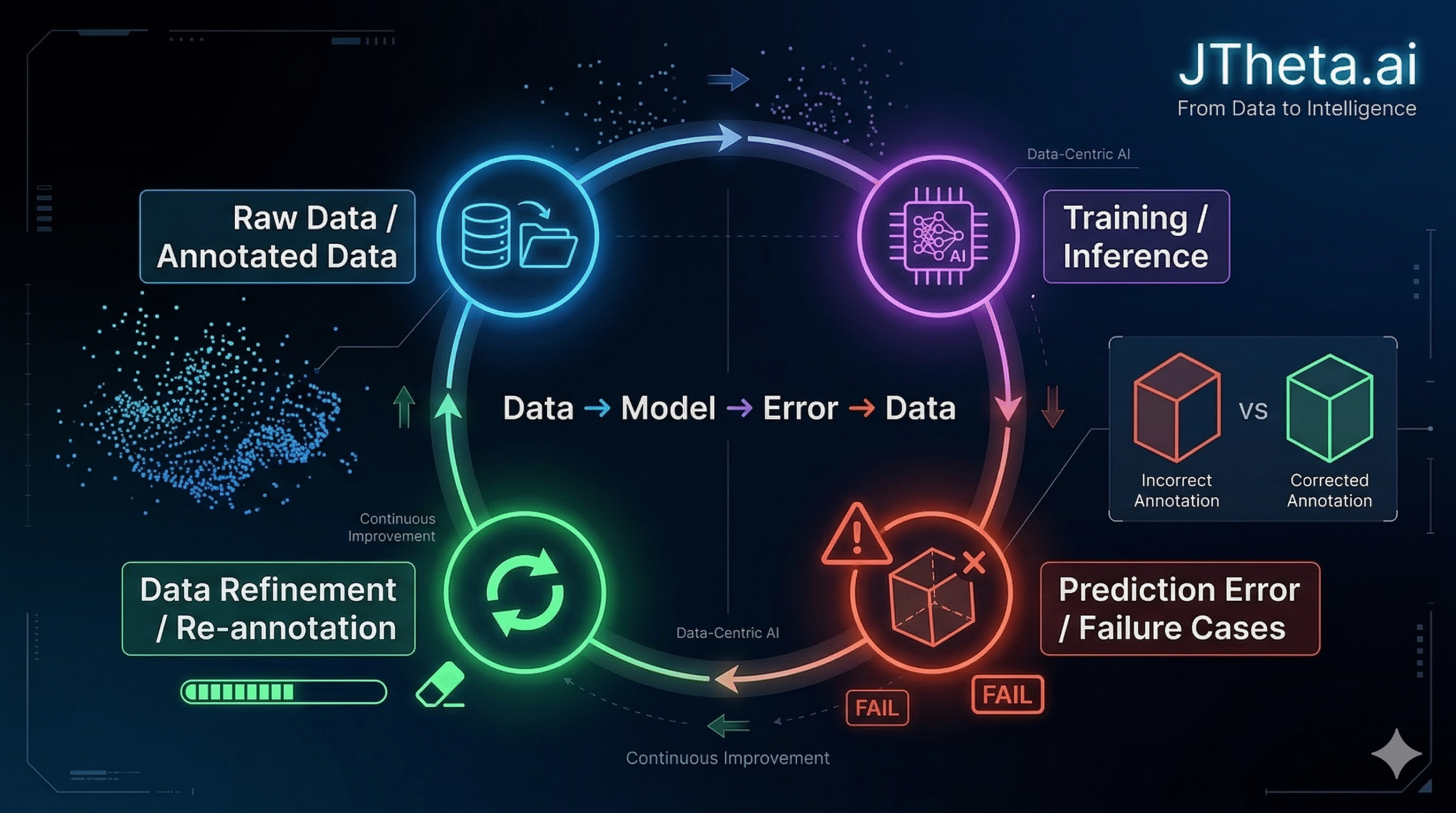

The Bigger Problem: Annotation ≠ Understanding

Here’s where most pipelines fail:

They treat annotation as a task to complete.

But in reality:

Annotation is a translation layer between reality and machine perception

If that translation is flawed:

Models learn incorrect priors

Edge cases remain invisible

Performance improvements plateau

The JTheta.ai Perspective

At JTheta.ai, we don’t just build annotation workflows—

we build data intelligence systems.

Our approach:

Context-aware annotation

→ Understanding object behavior, not just shape

Geometric & statistical validation

→ Ensuring consistency across datasets

Closed-loop learning

Because:

Better models don’t come from more data.

They come from better-understood data.

🔗 Explore JTheta.ai

🚀 Book a Demo:

https://www.jtheta.ai/book-a-demo/

💬 Join Our Discord Community (LiDAR & Perception Discussions): https://discord.com/invite/8RHFFpzZvA

🌐 About JTheta.ai

Building intelligent perception workflows, where data is not just labeled, but truly understood.