Why Physical AI Is Harder Than Digital AI, and What Most Robotics Companies Get Wrong

Discover why physical AI is harder than digital AI and how weak data infrastructure causes robotics deployment failures. Learn what high-maturity teams do differently.

What Is Physical AI?

Physical AI refers to artificial intelligence systems that interact with and act within the real world through robotics, autonomous systems, and sensor-driven machines.

Unlike digital AI, which operates in structured software environments, physical AI must perceive dynamic physical environments in real time through sensor fusion and perception systems, then act within them through actuation pipelines.

Examples of physical AI systems include:

Autonomous vehicles

Warehouse robotics

Agricultural robotics

Industrial inspection robots

Defense and surveillance systems

The core distinction is this:

Digital AI processes data.

Physical AI must interpret space and act safely within it.

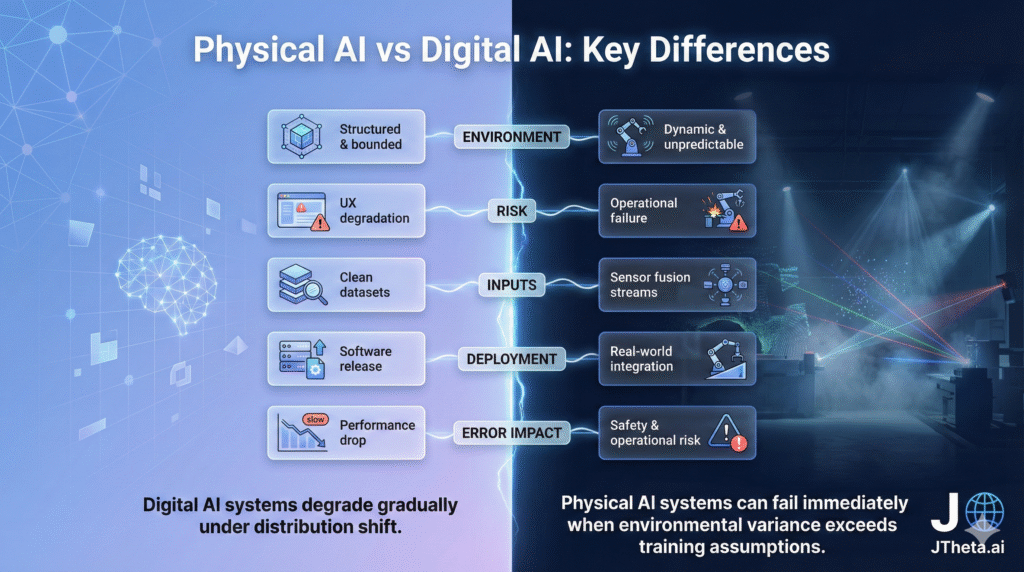

Physical AI vs Digital AI: Key Differences

Digital AI systems usually degrade gradually under distribution shift.

Physical AI systems can fail immediately when environmental variance exceeds training assumptions.

Why Physical AI Is Harder Than Digital AI

1. Unbounded Environments

Digital AI operates on bounded, well-defined inputs: text, images, transactions.

Physical AI must contend with:

Changing lighting conditions

Weather interference

Occlusions

Surface reflections

Mechanical vibration

The real world is not version-controlled.

2. Sensor Fusion Complexity

Robotics AI depends on multiple modalities:

LiDAR (depth mapping)

Camera (classification & segmentation)

Radar (velocity detection)

IMU (motion compensation)

Each sensor introduces noise and calibration risk.

Misalignment across modalities compounds perception errors, degrading object detection, obstacle tracking, and spatial reasoning.
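To see why misalignment compounds with distance, here is a minimal sketch that assumes a pure yaw (rotation) calibration error between two sensors and ignores translation. The helper name and the 0.5° error are illustrative assumptions, not figures from any particular rig. Rotating a point at range r by an angle θ moves it by 2·r·sin(θ/2), so the displacement grows linearly with range.

```python
import math


def yaw_misalignment_offset(x: float, y: float, yaw_error_deg: float) -> float:
    """Distance (m) a point at (x, y) moves under a yaw calibration error.

    Hypothetical helper: assumes a pure rotation about the vertical axis
    and no translation error between the two sensors.
    """
    theta = math.radians(yaw_error_deg)
    # Rotate the point the way miscalibrated extrinsics would.
    x_rot = x * math.cos(theta) - y * math.sin(theta)
    y_rot = x * math.sin(theta) + y * math.cos(theta)
    # Euclidean distance between the true point and its misprojection.
    return math.hypot(x_rot - x, y_rot - y)


if __name__ == "__main__":
    # Illustrative 0.5 degree yaw error evaluated at increasing ranges.
    for range_m in (5.0, 15.0, 30.0, 60.0):
        offset = yaw_misalignment_offset(range_m, 0.0, 0.5)
        print(f"range {range_m:4.0f} m -> offset {offset:.3f} m")
```

Under these assumptions, a 0.5° error shifts a point by about 4 cm at 5 m but about 26 cm at 30 m, which can be enough to project a LiDAR return off a narrow object in the camera image.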

👉 Learn more: https://www.jtheta.ai/geospatial-satellite-imaging/

3. Real-Time Actuation Risk

Digital AI produces recommendations. Physical AI produces actions. A model error in digital AI may reduce engagement metrics.

A perception error in robotics can:

Trigger emergency stops

Cause navigation instability

Halt production environments

This amplifies the cost of minor inaccuracies.

4. Annotation Precision in 3D Systems

3D perception systems rely heavily on:

LiDAR data annotation

3D bounding box alignment

Semantic segmentation consistency

Spatial labeling accuracy

Even minor annotation inconsistencies give the model contradictory supervision, which destabilizes training.

Without structured QA and schema governance, model reproducibility degrades.
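As a rough illustration of why centimeter-level consistency matters, the sketch below computes 3D Intersection over Union (IoU) for axis-aligned boxes. It assumes boxes without a heading angle (real LiDAR labels usually include one), and the box sizes and names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Box3D:
    """Axis-aligned 3D box: center and size in meters.

    Hypothetical structure; production label formats usually add a yaw
    angle, which this sketch ignores.
    """

    cx: float
    cy: float
    cz: float
    length: float  # extent along x
    width: float   # extent along y
    height: float  # extent along z

    def volume(self) -> float:
        return self.length * self.width * self.height


def _overlap_1d(c1: float, s1: float, c2: float, s2: float) -> float:
    """Overlap length of two intervals given as (center, size)."""
    lo = max(c1 - s1 / 2, c2 - s2 / 2)
    hi = min(c1 + s1 / 2, c2 + s2 / 2)
    return max(0.0, hi - lo)


def iou_3d(a: Box3D, b: Box3D) -> float:
    """Intersection over Union of two axis-aligned 3D boxes."""
    inter = (
        _overlap_1d(a.cx, a.length, b.cx, b.length)
        * _overlap_1d(a.cy, a.width, b.cy, b.width)
        * _overlap_1d(a.cz, a.height, b.cz, b.height)
    )
    union = a.volume() + b.volume() - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    car = Box3D(10.0, 2.0, 0.75, 4.5, 1.8, 1.5)
    pedestrian = Box3D(8.0, -1.0, 0.85, 0.6, 0.6, 1.7)
    # Shift each label 0.2 m along x, as an inconsistent annotator might.
    for name, box in (("car", car), ("pedestrian", pedestrian)):
        shifted = replace(box, cx=box.cx + 0.2)
        print(f"{name}: IoU after 0.2 m shift = {iou_3d(box, shifted):.3f}")
```

In this example the same 0.2 m offset leaves the car at an IoU of about 0.91 but drops the pedestrian to 0.5, because a fixed absolute error is a much larger fraction of a small object.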

👉 Related Reading:

https://www.jtheta.ai/3d-point-cloud-lidar-automation-platform/

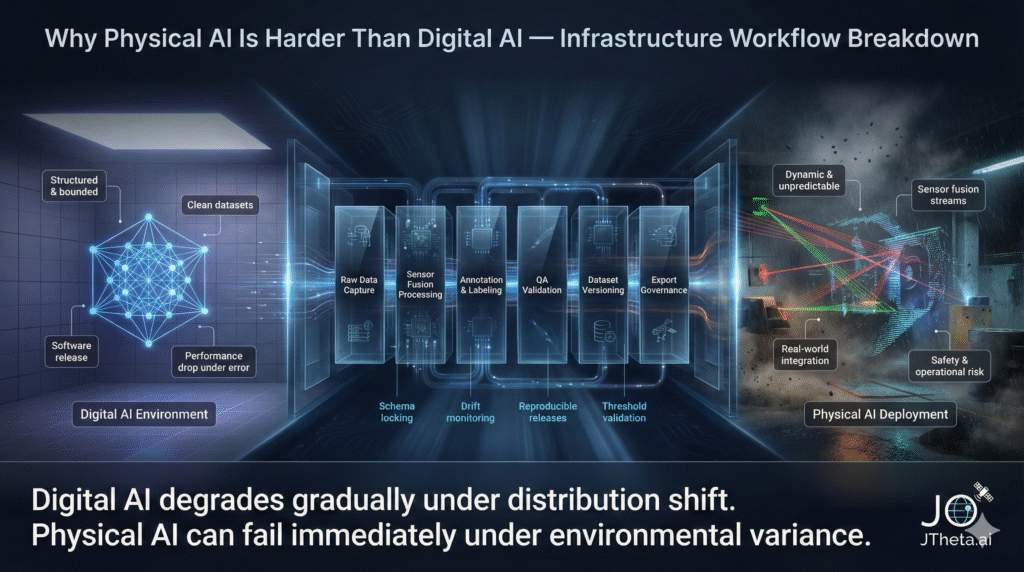

The Hidden Problem: Weak Robotics Data Infrastructure

Most robotics companies focus on:

Model architecture

Simulation environments

Compute optimization

Few prioritize:

Dataset version control

Annotation schema locking

QA thresholds

Export governance

Metadata validation

This leads to:

Dataset drift

Irreproducible experiments

Silent performance degradation

Deployment instability

Robotics AI failures often originate in the data layer — not the neural network.

Simulation vs Real-World Deployment

Simulation accelerates iteration.

However, synthetic environments rarely replicate:

Sensor vibration noise

Environmental dust

Reflective surfaces

Rain and fog interference

Teams that rely heavily on simulation without real-world data validation encounter deployment gaps.

High-performing robotics organizations treat simulation as augmentation — not replacement.

What High-Maturity Robotics Teams Do Differently

Successful physical AI teams implement:

1. Dataset Versioning

Every training cycle references a frozen, reproducible dataset release.
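One common way to make a release frozen and reproducible is to hash its contents. The sketch below assumes a release is a directory of files and uses a hypothetical manifest format (per-file SHA-256 digests plus a short release ID derived from them); it is not the format of any specific tool.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def file_sha256(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large sensor logs are never fully loaded."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_release_manifest(root: Path) -> dict:
    """Hypothetical manifest: per-file digests plus a release ID derived from
    them, so adding, removing, or editing any file changes the ID."""
    files = {
        p.relative_to(root).as_posix(): file_sha256(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }
    # Sorted-key JSON gives a deterministic byte string to hash.
    canonical = json.dumps(files, sort_keys=True).encode("utf-8")
    # Truncated to 16 hex characters purely for readability.
    return {"release_id": hashlib.sha256(canonical).hexdigest()[:16], "files": files}


def verify_release(root: Path, manifest: dict) -> bool:
    """True only if the directory still matches the frozen manifest exactly."""
    return build_release_manifest(root) == manifest


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "labels").mkdir()
        label_file = root / "labels" / "frame_0001.json"
        label_file.write_text('{"boxes": []}')
        manifest = build_release_manifest(root)
        print("release", manifest["release_id"], "valid:", verify_release(root, manifest))
        # A silent in-place edit is caught on the next verification.
        label_file.write_text('{"boxes": [{"label": "car"}]}')
        print("after edit, valid:", verify_release(root, manifest))
```

A training config could then pin `release_id`, and a training job could refuse to start whenever `verify_release` returns `False`.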

2. Annotation Governance

The annotation taxonomy is locked before annotation operations scale up.
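A locked taxonomy is easiest to enforce in code. This sketch uses a hypothetical four-class taxonomy and version tag and flags any annotation whose label or taxonomy version does not match; the class names and record layout are illustrative assumptions.

```python
from __future__ import annotations

from enum import Enum

TAXONOMY_VERSION = "v1.0"  # hypothetical version tag for the locked taxonomy


class LabelClass(str, Enum):
    """Hypothetical locked taxonomy. Changing it means publishing a new
    TAXONOMY_VERSION, never editing classes in place."""

    CAR = "car"
    PEDESTRIAN = "pedestrian"
    CYCLIST = "cyclist"
    FORKLIFT = "forklift"


ALLOWED_LABELS = frozenset(member.value for member in LabelClass)


def validate_labels(annotations: list[dict]) -> list[str]:
    """Return one error string per violation; an empty list means the batch conforms."""
    errors = []
    for i, ann in enumerate(annotations):
        label = ann.get("label")
        version = ann.get("taxonomy_version")
        if label not in ALLOWED_LABELS:
            errors.append(f"annotation {i}: unknown label {label!r}")
        if version != TAXONOMY_VERSION:
            errors.append(f"annotation {i}: taxonomy {version!r}, expected {TAXONOMY_VERSION!r}")
    return errors


if __name__ == "__main__":
    batch = [
        {"label": "car", "taxonomy_version": "v1.0"},
        {"label": "Car", "taxonomy_version": "v1.0"},
        {"label": "truck", "taxonomy_version": "v0.9"},
    ]
    for err in validate_labels(batch):
        print(err)
```

Checks like this catch near-duplicates such as `Car` versus `car` before they quietly split one class into two in the training data.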

3. Structured QA Thresholds

Annotation validation is metric-based, not subjective.
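Metric-based QA can be as simple as comparing an annotator's objects against a reviewer's reference and applying explicit thresholds. The record layout and threshold values below are assumptions for illustration; appropriate values depend on the sensor, the object classes, and the downstream model.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class QAThresholds:
    """Illustrative acceptance thresholds, not recommended values."""

    min_label_agreement: float = 0.98
    max_mean_center_error_m: float = 0.10
    max_missing_fraction: float = 0.02


def qa_gate(annotator: dict, reviewer: dict, t: QAThresholds = QAThresholds()) -> dict:
    """Score one batch against a reviewer's reference and apply the thresholds.

    Both dicts map object_id -> (label, (x, y, z) center in meters); this
    layout is an assumption made for the sketch. Extra objects that the
    reviewer did not mark are not penalized here; a real gate likely would.
    """
    matched = set(reviewer) & set(annotator)
    missing = 1 - len(matched) / len(reviewer) if reviewer else 0.0
    if matched:
        agreement = sum(annotator[i][0] == reviewer[i][0] for i in matched) / len(matched)
        center_err = sum(math.dist(annotator[i][1], reviewer[i][1]) for i in matched) / len(matched)
    else:
        # Nothing to compare: fail the batch rather than pass it vacuously.
        agreement, center_err = 0.0, math.inf
    passed = (
        agreement >= t.min_label_agreement
        and center_err <= t.max_mean_center_error_m
        and missing <= t.max_missing_fraction
    )
    return {
        "label_agreement": agreement,
        "mean_center_error_m": center_err,
        "missing_fraction": missing,
        "passed": passed,
    }
```

Because every threshold is a number, two reviewers applying this gate to the same batch reach the same verdict, which is the point of replacing subjective sign-off.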

4. Export Validation Pipelines

Every export passes automated validation; there are no ad-hoc dataset handoffs.
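An export gate can run schema and sanity checks on every record before handoff. The required fields, the `release_id` linkage, and the specific checks are a hypothetical example of such a pipeline, not a standard format; the sketch also assumes numeric values in `center` and `size`.

```python
from __future__ import annotations

import math

# Hypothetical export schema for one annotated object.
REQUIRED_FIELDS = ("frame_id", "label", "center", "size", "release_id")


def validate_export_record(record: dict, expected_release_id: str) -> list[str]:
    """Check one exported record; an empty list means it passes."""
    errors = [f"missing field {f!r}" for f in REQUIRED_FIELDS if f not in record]
    if errors:
        return errors  # later checks assume every field exists
    if record["release_id"] != expected_release_id:
        errors.append("record belongs to a different dataset release")
    center, size = record["center"], record["size"]
    if len(center) != 3 or not all(math.isfinite(v) for v in center):
        errors.append("center must be three finite numbers")
    if len(size) != 3 or not all(math.isfinite(v) and v > 0 for v in size):
        errors.append("size must be three finite, positive numbers")
    return errors


def validate_export(records: list[dict], expected_release_id: str) -> dict[int, list[str]]:
    """Map record index -> errors; an empty dict means the export can be handed off."""
    report = {}
    for i, rec in enumerate(records):
        errs = validate_export_record(rec, expected_release_id)
        if errs:
            report[i] = errs
    return report
```

Tying each record to a `release_id` is what lets a consumer confirm that the export came from the frozen release it expects.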

5. Drift Monitoring

Shifts in environmental conditions between training data and field data are tracked continuously.
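One simple way to track drift is the Population Stability Index (PSI), which compares how a signal is distributed across fixed bins in a reference set and in new data: PSI = Σ (c_i - r_i)·ln(c_i / r_i). The sketch below assumes a scalar per-frame signal (for example, mean image brightness) and hand-picked bin edges; both are illustrative choices, not a prescribed method.

```python
from __future__ import annotations

import math
from bisect import bisect_right
from collections.abc import Sequence


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> list[float]:
    """Fraction of values in each of len(edges) + 1 bins defined by sorted edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    edges: Sequence[float],
    eps: float = 1e-6,
) -> float:
    """PSI = sum over bins of (c - r) * ln(c / r).

    Empty bins are floored at eps to avoid division by zero and log(0).
    """
    psi = 0.0
    for r, c in zip(bin_fractions(reference, edges), bin_fractions(current, edges)):
        r, c = max(r, eps), max(c, eps)
        psi += (c - r) * math.log(c / r)
    return psi


if __name__ == "__main__":
    # Hypothetical per-frame mean brightness (0..1): training set vs field logs.
    train = [i / 100 for i in range(100)]
    field = [min(v + 0.3, 0.999) for v in train]  # e.g. a much brighter site
    edges = [0.25, 0.5, 0.75]
    print(f"PSI train vs train: {population_stability_index(train, train, edges):.3f}")
    print(f"PSI train vs field: {population_stability_index(train, field, edges):.3f}")
```

A commonly cited rule of thumb, borrowed from credit-risk modeling rather than robotics, treats PSI below 0.1 as stable and above 0.25 as a significant shift; thresholds for a given fleet would need to be set empirically.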

👉 Explore:

https://www.jtheta.ai/advanced-analytics-dashboards/

https://www.jtheta.ai/lidar-annotation-3d-perception-platform/

Why Small Data Errors Cause Large Robotics Failures

In digital AI, a 2% error might reduce click-through rate (CTR).

In robotics AI, a 2% spatial misalignment can:

Misjudge obstacle distance

Disrupt path planning

Trigger unnecessary system overrides

Physical AI amplifies error because:

Decisions are spatial

Outputs are actuated

Feedback loops are immediate

The maturity of the underlying data infrastructure largely determines how much error margin the system has.
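A back-of-the-envelope sketch shows how a 2% range error eats into a safety margin. It assumes a simple stopping model (reaction distance plus constant-deceleration braking, d = v·t + v²/(2a)), a hypothetical planner that brakes when the estimated range equals stopping distance plus a fixed buffer, and illustrative values for speed, latency, deceleration, and buffer; none come from a real platform.

```python
def stopping_distance(speed_mps: float, reaction_s: float, decel_mps2: float) -> float:
    """Reaction distance plus braking distance at constant deceleration."""
    return speed_mps * reaction_s + speed_mps**2 / (2 * decel_mps2)


def clearance_at_stop(
    speed_mps: float,
    reaction_s: float,
    decel_mps2: float,
    buffer_m: float,
    range_error: float,
) -> float:
    """Gap left to the obstacle once the robot stops.

    The hypothetical planner starts braking when the *estimated* range equals
    stopping distance + buffer. A positive range_error means the obstacle
    looks farther away than it is (0.02 for a 2% overestimate).
    """
    stop = stopping_distance(speed_mps, reaction_s, decel_mps2)
    estimated_trigger = stop + buffer_m
    true_trigger = estimated_trigger / (1 + range_error)
    return true_trigger - stop


if __name__ == "__main__":
    # Illustrative values: 0.3 s latency, 1.0 m/s^2 braking, 0.3 m buffer.
    for speed in (1.0, 2.0, 4.0):
        ideal = clearance_at_stop(speed, 0.3, 1.0, 0.3, 0.0)
        biased = clearance_at_stop(speed, 0.3, 1.0, 0.3, 0.02)
        print(f"{speed:.0f} m/s: clearance {ideal:.3f} m -> {biased:.3f} m with 2% error")
```

Under these assumptions the planned 0.3 m clearance shrinks to about 0.28 m at 1 m/s, 0.24 m at 2 m/s, and 0.11 m at 4 m/s: the error scales with stopping distance while the buffer stays fixed.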

How to Evaluate Your Robotics AI Infrastructure

Ask:

Are datasets version-controlled?

Is annotation taxonomy documented?

Are QA thresholds measurable?

Is export reproducible?

Can experiments be traced to specific dataset versions?

If not, your physical AI system may be structurally fragile.
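The last question on that list lends itself to automation. The sketch below assumes each experiment run logs the dataset release ID it trained on and that a registry of frozen release IDs exists; the record type and example IDs are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRun:
    """Hypothetical run record; real experiment trackers store much more."""

    run_id: str
    dataset_release: str | None  # release ID the run trained on, if logged


def audit_traceability(
    runs: list[ExperimentRun], known_releases: set[str]
) -> tuple[list[str], list[str]]:
    """Split run IDs into (traceable, untraceable).

    A run is untraceable if it logged no release or references a release
    missing from the registry of frozen releases.
    """
    traceable: list[str] = []
    untraceable: list[str] = []
    for run in runs:
        if run.dataset_release is not None and run.dataset_release in known_releases:
            traceable.append(run.run_id)
        else:
            untraceable.append(run.run_id)
    return traceable, untraceable


if __name__ == "__main__":
    registry = {"3f9a1c2e7b4d5a60"}  # made-up release IDs
    runs = [
        ExperimentRun("run-001", "3f9a1c2e7b4d5a60"),
        ExperimentRun("run-002", None),
        ExperimentRun("run-003", "0000000000000000"),
    ]
    ok, missing = audit_traceability(runs, registry)
    print("traceable:", ok)
    print("untraceable:", missing)
```

Any run in the untraceable list is one whose results cannot be reproduced from a known dataset state.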

Book a Demo: Evaluate Your AI Data Infrastructure

If your robotics system depends on:

High-precision LiDAR annotation

Structured dataset lifecycle management

Reproducible training workflows

Scalable QA frameworks

It may be time to assess infrastructure maturity.

👉 Book a Demo:

https://jtheta.ai/book-a-demo