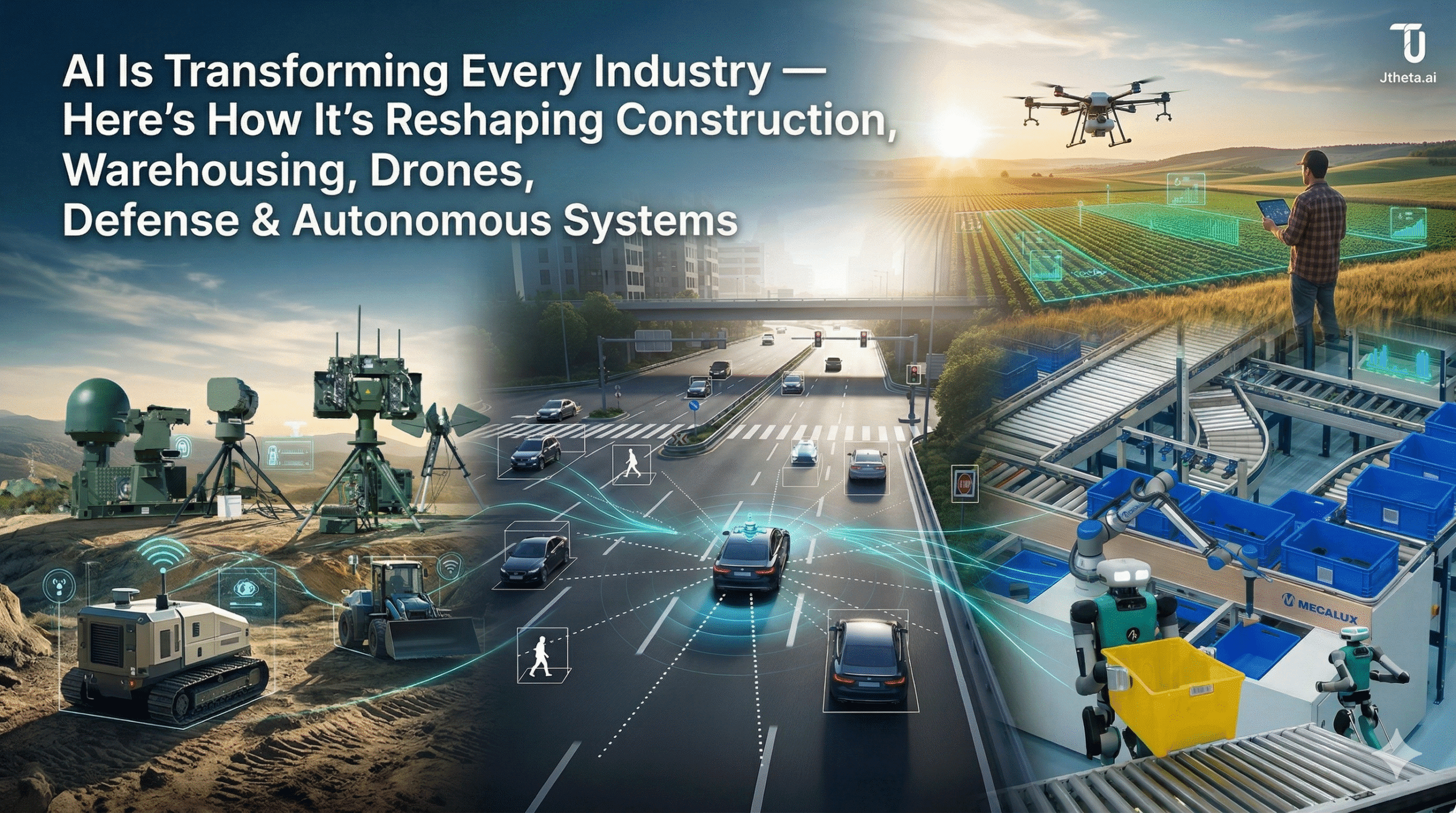

AI across industries: how intelligent systems are reshaping the real world

From construction sites to autonomous vehicles, AI is no longer a prototype — it’s the backbone of next-generation infrastructure. Here’s where it’s making the biggest impact, and why the data behind it matters most.

Artificial intelligence has crossed a threshold. It’s no longer confined to research labs or carefully controlled demos — it’s actively operating in some of the most complex, unstructured, and high-stakes environments humans have built. Construction sites. Fulfillment warehouses. Drone fleets. Defense networks. Autonomous vehicles rolling through city streets.

And behind every one of these applications, there’s a common engine quietly powering it all: high-quality, scalable data.

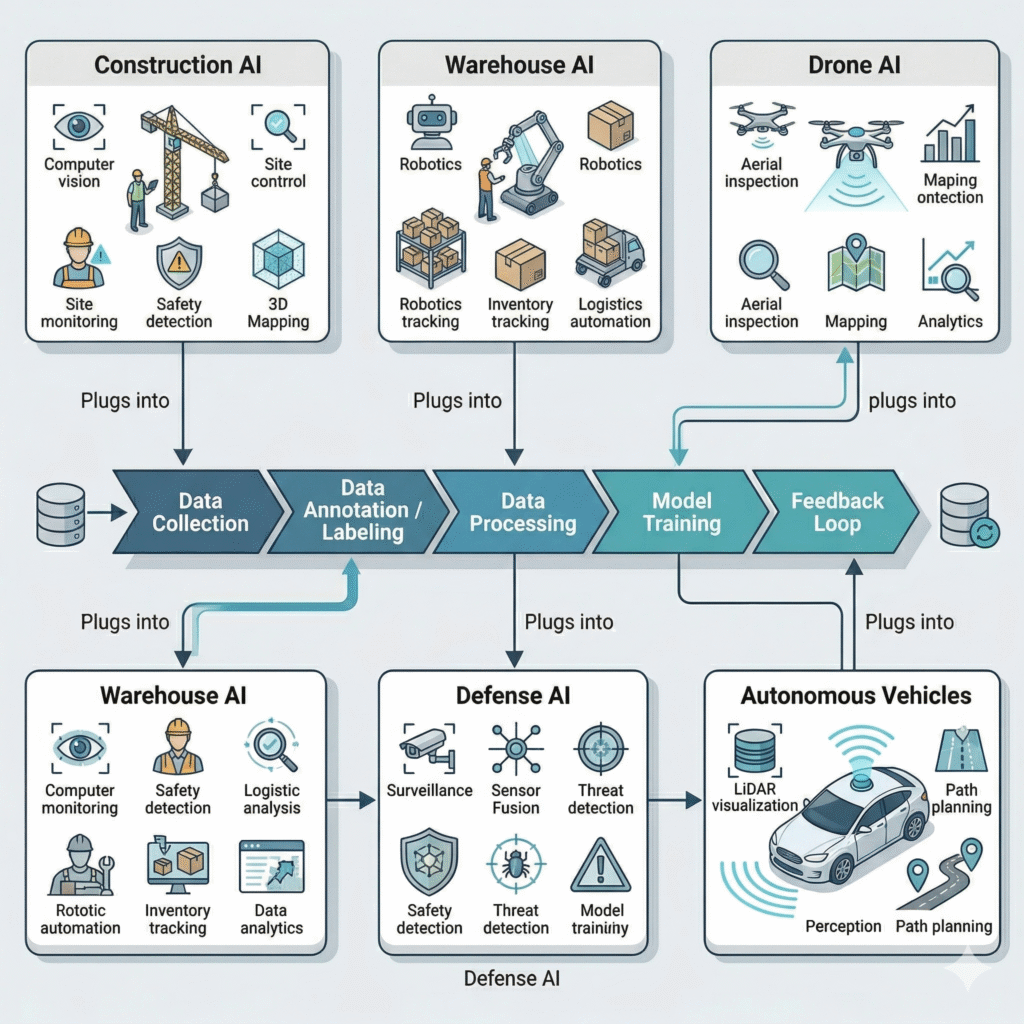

🏗️ AI in Construction: Building Smarter, Safer Sites

Few environments are as complex and unstructured as an active construction site, and AI is finally bringing the visibility and control the industry has long lacked.

For decades, construction has been plagued by delays, cost overruns, and safety incidents. The root cause is almost always the same: decisions made on incomplete, outdated, or siloed information. AI changes that equation by giving decision-makers current, consolidated information from across the site.

📦 AI in Warehousing: Powering Intelligent Logistics

Automated picking and sorting systems now handle fulfillment tasks that once required entire teams. Vision-based inventory tracking — using cameras and sensors rather than manual counts — gives operators near real-time visibility into stock levels across massive facilities. Demand forecasting models analyze purchasing patterns, seasonal trends, and supply chain signals to predict what’s needed before it runs out.

The result: faster order fulfillment, dramatically reduced operational costs, and inventory accuracy that simply wasn’t possible before.
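The demand-forecasting step described above can be illustrated with one of the simplest forecasting techniques, simple exponential smoothing, paired with a reorder-point check. This is a minimal sketch assuming a single SKU with a weekly sales history; the function names, smoothing factor, lead time, and safety stock are illustrative assumptions, not taken from any particular product. Real forecasting systems typically also model trend and seasonality, which this sketch ignores.

```python
"""Minimal demand-forecasting sketch: simple exponential smoothing plus a reorder check.

Assumptions (illustrative only): weekly unit sales for one SKU, a fixed
smoothing factor, and a supplier lead time measured in weeks.
"""


def exponential_smoothing_forecast(history: list[float], alpha: float = 0.3) -> float:
    """Return a one-step-ahead forecast using simple exponential smoothing.

    Each new observation pulls the running level toward itself by a factor
    of alpha, so older observations decay geometrically.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = float(history[0])
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def needs_reorder(on_hand: float, forecast_per_period: float,
                  lead_time_periods: int, safety_stock: float) -> bool:
    """Reorder when stock on hand won't cover forecast demand over the lead time plus a buffer."""
    reorder_point = forecast_per_period * lead_time_periods + safety_stock
    return on_hand <= reorder_point


if __name__ == "__main__":
    weekly_sales = [120, 135, 128, 150, 162, 158]  # hypothetical sales history
    forecast = exponential_smoothing_forecast(weekly_sales, alpha=0.3)
    print(f"Next-week forecast: {forecast:.1f} units")
    print("Reorder now?", needs_reorder(on_hand=400, forecast_per_period=forecast,
                                        lead_time_periods=2, safety_stock=50))
```

A higher `alpha` reacts faster to recent demand shifts but also chases noise; choosing it is exactly the kind of decision that depends on having clean historical records.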

But warehousing AI runs on data, much of it from sensors, and that data needs to be clean, structured, and labeled at scale. Route optimization models need historical movement data. Picking robots need annotated visual datasets to recognize thousands of SKUs. Forecasting systems need structured sales and supply chain records going back years.

The warehouses winning today aren’t just deploying robots. They’re building the data infrastructure that makes those robots reliable.

🚁 AI in Drone Systems: Intelligence from the Sky

Drones have been around for years. But drones combined with AI are something fundamentally different — they’re becoming autonomous sensing platforms capable of understanding the world they fly through.

Infrastructure inspection is one of the clearest use cases. Inspecting bridges, pipelines, and transmission towers used to mean sending humans into dangerous, hard-to-reach locations. AI-equipped drones now fly those routes autonomously, capturing high-resolution imagery and flagging anomalies — cracks, corrosion, structural deformation — with computer vision models trained on thousands of labeled examples.

Aerial mapping and surveying have similarly been transformed. What once took weeks of manual effort can now be completed in hours, with drones generating precise topographic data that feeds directly into planning and engineering workflows.

🛡️ AI in Defense: Enhancing Situational Awareness

Defense systems generate data at a scale and speed that no human team can fully process. Radar signals, satellite imagery, sensor feeds, communications intercepts — the volume is staggering. AI doesn’t just help manage that volume. It transforms it into actionable intelligence.

Surveillance and threat detection systems now use AI to monitor environments continuously, identifying patterns and anomalies that would take human analysts hours to surface. Sensor fusion — combining inputs from radar, cameras, acoustic sensors, and other sources into a unified operational picture — gives commanders clarity that was previously impossible.

🚗 AI in Autonomous Vehicles: Driving the Future

Autonomous vehicles represent the most ambitious and technically demanding application of AI in the physical world. They combine perception, reasoning, prediction, and control — all in real time, at highway speed, with human lives on the line.

The perception stack alone is extraordinarily complex. LiDAR generates dense 3D point clouds of the environment. Radar tracks objects through rain, fog, and darkness. Cameras capture the visual scene with the resolution needed for reading signs, detecting pedestrians, and interpreting road markings. Fusing all three into a coherent, real-time model of the world — sensor fusion — is one of the hardest problems in applied AI.
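One small building block of sensor fusion can be sketched in a few lines. Assuming each sensor independently reports a distance to the same object with Gaussian noise of known standard deviation, inverse-variance weighting gives the maximum-likelihood combined estimate: precise sensors count more, noisy ones count less. The `RangeReading` format and the noise values below are hypothetical, and production stacks fuse full object states over time (commonly with Kalman-style filters) rather than a single distance.

```python
import math
from dataclasses import dataclass


@dataclass
class RangeReading:
    """One sensor's distance estimate to the same tracked object (hypothetical format)."""
    sensor: str
    distance_m: float
    std_dev_m: float  # assumed 1-sigma measurement noise for this sensor


def fuse_inverse_variance(readings: list[RangeReading]) -> tuple[float, float]:
    """Fuse independent Gaussian estimates of one quantity.

    Each reading is weighted by 1 / variance. Returns (fused_distance_m, fused_std_dev_m).
    """
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / (r.std_dev_m ** 2) for r in readings]
    total_weight = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total_weight
    # The fused variance is the reciprocal of the summed weights.
    fused_std = math.sqrt(1.0 / total_weight)
    return fused, fused_std


if __name__ == "__main__":
    readings = [
        RangeReading("lidar", 42.10, 0.05),   # illustrative noise values, not real sensor specs
        RangeReading("radar", 42.60, 0.50),
        RangeReading("camera", 40.90, 2.00),
    ]
    distance, sigma = fuse_inverse_variance(readings)
    print(f"Fused distance: {distance:.2f} m (+/- {sigma:.2f} m)")
```

The fused uncertainty is always smaller than the best individual sensor's, which is the basic payoff of fusion; the hard part in practice is that real sensor errors are neither independent nor Gaussian.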

On top of perception sits decision-making: prediction models anticipate what other road users will do, path planning algorithms calculate safe trajectories through dynamic environments, and the system as a whole must make split-second calls in edge cases. Advanced Driver Assistance Systems (ADAS) bring many of these capabilities to vehicles on the road today, including automatic emergency braking, lane-keeping, and adaptive cruise control, as stepping stones toward full autonomy.
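The core idea behind path planning, searching for the cheapest collision-free route, can be shown with classic A* search on an occupancy grid. This is a textbook sketch, not how any particular vehicle plans: it assumes a static grid where 0 is free and 1 is blocked, unit-cost 4-connected moves, and no vehicle dynamics or moving obstacles, all of which real planners must handle.

```python
import heapq
from typing import Optional

Cell = tuple[int, int]


def astar(grid: list[list[int]], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """Find a shortest 4-connected path on an occupancy grid (0 = free, 1 = blocked)."""
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell: Cell) -> int:
        # Manhattan distance never overestimates for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    # Heap entries are (estimated total cost, cost so far, cell).
    open_heap = [(heuristic(start), 0, start)]
    came_from: dict[Cell, Cell] = {}
    best_cost: dict[Cell, int] = {start: 0}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk parent links back to the start, then reverse.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost.get(current, float("inf")):
            continue  # stale heap entry; a cheaper route to this cell was already found
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    came_from[(nr, nc)] = current
                    heapq.heappush(open_heap, (new_cost + heuristic((nr, nc)), new_cost, (nr, nc)))
    return None  # goal unreachable


if __name__ == "__main__":
    occupancy = [
        [0, 0, 0, 0],
        [1, 1, 0, 1],
        [0, 0, 0, 0],
    ]
    print(astar(occupancy, (0, 0), (2, 0)))
```

Production planners typically search over continuous, kinematically feasible trajectories and re-plan many times per second as predictions change, but the trade-off A* makes explicit (cost so far plus an optimistic estimate of cost to go) carries over.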

Join the community building this

We’re bringing together AI builders, data practitioners, and industry specialists who are working on exactly these challenges — not in theory, but in production. Whether you’re annotating drone imagery, designing data workflows for autonomous systems, or trying to figure out where to start, this is where those conversations happen.

Discord Link: https://discord.gg/your-link-here

Book a Demo: https://www.jtheta.ai/book-a-demo/